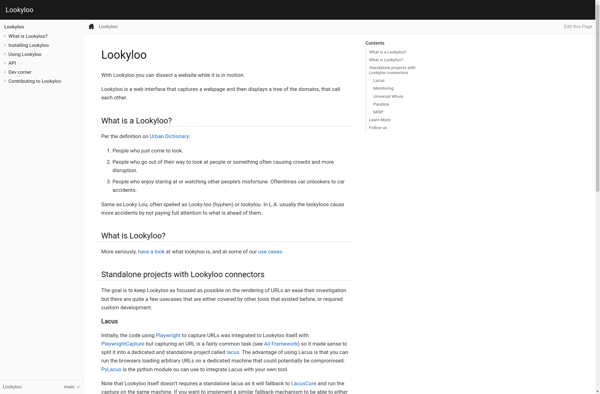

Description: Lookyloo is an open source web forensics tool for capturing and analyzing websites. It captures a page, then displays a tree of the domains and resources that page loads, which helps identify security issues and track changes.

Type: software

Pricing: Open Source

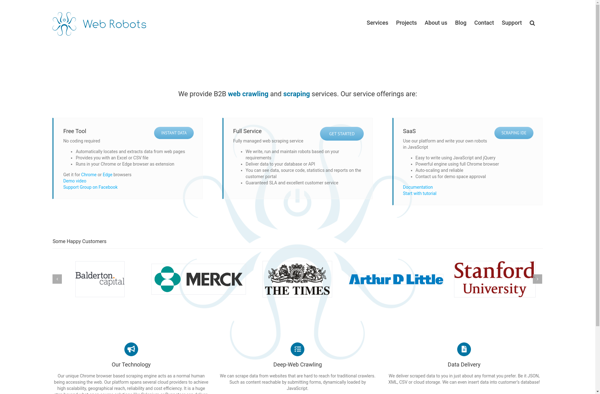

Description: Web robots, also called web crawlers or spiders, are programs that systematically browse the web to index web pages for search engines. They crawl websites to gather information and store it in a searchable database.

Type: software
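
The crawl-and-index loop described above can be sketched in a few lines of Python. This is only an illustrative sketch, not how any particular search engine or product works: the `crawl` function, the `LinkAndTextParser` class, the in-memory `SITE` dictionary, and the `example.test` URLs are all hypothetical names chosen for the example, and pages are read from a dictionary instead of the network so the sketch runs offline.

```python
from collections import deque
from html.parser import HTMLParser
from urllib.parse import urljoin
import re


class LinkAndTextParser(HTMLParser):
    """Collects href values of <a> tags and the visible text of a page."""

    def __init__(self):
        super().__init__()
        self.links = []
        self.text = []

    def handle_starttag(self, tag, attrs):
        if tag == "a":
            for name, value in attrs:
                if name == "href" and value:
                    self.links.append(value)

    def handle_data(self, data):
        self.text.append(data)


def crawl(start_url, fetch, max_pages=50):
    """Breadth-first crawl; returns an inverted index {word: set of URLs}.

    `fetch` is any callable mapping a URL to HTML text, or None if the
    page is unavailable (an assumption made to keep the sketch offline).
    """
    index = {}
    seen = {start_url}
    queue = deque([start_url])
    visited = 0
    while queue and visited < max_pages:
        url = queue.popleft()
        html = fetch(url)
        if html is None:
            continue
        visited += 1
        parser = LinkAndTextParser()
        parser.feed(html)
        # Store every word of the page in the searchable index.
        for word in re.findall(r"[a-z0-9]+", " ".join(parser.text).lower()):
            index.setdefault(word, set()).add(url)
        # Queue links that have not been seen yet.
        for href in parser.links:
            link = urljoin(url, href)
            if link not in seen:
                seen.add(link)
                queue.append(link)
    return index


# Hypothetical two-page site standing in for real HTTP responses.
SITE = {
    "http://example.test/": '<a href="/about">About</a> Welcome home',
    "http://example.test/about": '<a href="/">Home</a> About crawlers',
}

index = crawl("http://example.test/", SITE.get)
print(sorted(index["home"]))
```

A production crawler would also need to respect `robots.txt` (Python's standard library offers `urllib.robotparser` for this), rate-limit its requests, and persist the index, all of which this sketch leaves out.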