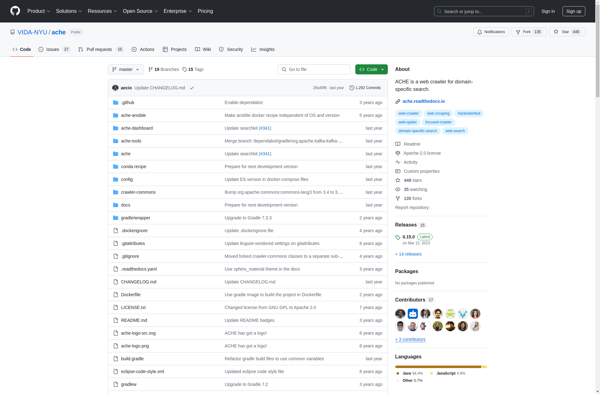

Description: ACHE Crawler is an open-source web crawler written in Java. It is designed to efficiently crawl large websites and collect structured data from them.

Type: software

Pricing: Open Source

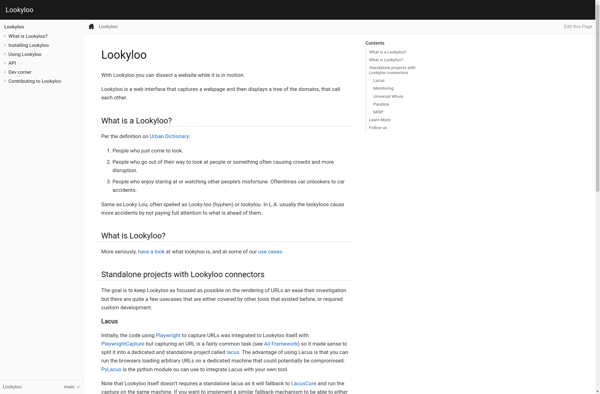

Description: Lookyloo is an open source web scanning framework designed for detecting and analyzing websites. It allows for easy crawling, scraping, and visualization of websites to identify security issues, track changes, and more.

Type: software

Pricing: Open Source